Best AI Models for Novel Writing in 2026: Head-to-Head Test (GPT-5, Claude, Gemini)

We tested GPT-5, GPT-4o, Claude Sonnet 4.5, Claude Opus 4.7, and Gemini 2.5 Pro on the same novel chapter prompts across 5 genres. Real scores for prose, character voice, plot logic, and continuity. Tested April 2026.

Quick Answer

No single model wins novel writing in 2026. Claude Opus 4.7 writes the best prose. GPT-5 writes the tightest plots. Gemini 2.5 Pro handles the longest context. Sonnet 4.5 is the best balance for drafting. GPT-4o is the cheap option. The honest answer is that the best novel comes from using different models for different jobs, which is why pasting your novel into ChatGPT or a single BYOK platform leaves quality on the table. Inkfluence AI routes each novel-writing step (outline, drafting, climax, continuity) to the model that scores highest for it, and bundles cover, EPUB, and audiobook on one $19.99/mo plan. The full per-model scores and per-genre verdicts are below.

Why This Matters

There is no single best model for novel writing. The best book comes from picking the right model for each job.

Most "best AI for novels" posts on Google are either a year stale or never tested fiction at all. They rank models on coding benchmarks. Novel writing has nothing to do with HumanEval. It demands long-context coherence across 50,000 words, character voice that does not drift, dialogue that does not sound like a press release, and prose that has rhythm.

We tested every major model released between Q4 2025 and April 2026 on the same five genre prompts, scored the output against a 7-dimension fiction rubric, and stack-ranked them. The interesting result is not which model "won" overall: it is that each model has a different job it does best. The writer who manually swaps between five model APIs to take advantage of that is rare. The writer who pays a managed tool to do it for them ends up with a better novel for less money. That is the case this post makes by the end.

This is the test most "best AI for novel writing" posts skip: the same fiction prompts, the same genres, every frontier model, blind expert grading. We ran it so writers do not have to and so the next conversation about "which model is best?" can be settled with numbers instead of vibes.

Models tested: GPT-5, GPT-4o, Claude Sonnet 4.5, Claude Opus 4.7, Gemini 2.5 Pro. Test window: April 12 to April 26, 2026. The point of this post is the model layer underneath the tools, not any single tool. We will return to the tool layer at the end with an opinion on what to actually do with these results.

The Scoreboard at a Glance

Each model scored on a 0-10 scale for seven dimensions: long-context coherence, character voice consistency, plot logic, prose quality, dialogue naturalness, genre adherence, and pacing. Each score is the average across five genre tests (literary, romance, thriller, fantasy, sci-fi).

| Model | Coherence | Voice | Plot | Prose | Dialogue | Genre | Pacing | Total |
|---|---|---|---|---|---|---|---|---|
| Claude Opus 4.7 | 9.2 | 9.4 | 8.6 | 9.5 | 9.0 | 8.8 | 8.7 | 63.2 |
| Claude Sonnet 4.5 | 8.8 | 9.0 | 8.4 | 9.1 | 8.7 | 8.5 | 8.4 | 60.9 |
| GPT-5 | 8.7 | 8.2 | 9.1 | 8.4 | 8.5 | 8.4 | 8.3 | 59.6 |
| Gemini 2.5 Pro | 9.0 | 8.0 | 8.5 | 8.1 | 7.9 | 8.0 | 8.0 | 57.5 |
| GPT-4o | 7.9 | 7.7 | 7.8 | 7.6 | 7.8 | 7.9 | 7.6 | 54.3 |

Scores are subjective expert grades against the rubric described in the next section. Three reviewers, blind-rated samples, scores averaged. Full sample chapters and prompts are referenced in our novel writer evaluation guide.
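As a quick sanity check on the scoreboard, the short Python sketch below re-derives each total from the seven per-dimension averages in the table and picks the top model for each dimension. The numbers are copied from the table; the helper names (`total`, `leaderboard`, `best_per_dimension`) are illustrative only, not part of any tool.

```python
# Per-dimension averages copied from the scoreboard table (0-10 each).
DIMENSIONS = ("coherence", "voice", "plot", "prose", "dialogue", "genre", "pacing")

SCORES = {
    "Claude Opus 4.7":   (9.2, 9.4, 8.6, 9.5, 9.0, 8.8, 8.7),
    "Claude Sonnet 4.5": (8.8, 9.0, 8.4, 9.1, 8.7, 8.5, 8.4),
    "GPT-5":             (8.7, 8.2, 9.1, 8.4, 8.5, 8.4, 8.3),
    "Gemini 2.5 Pro":    (9.0, 8.0, 8.5, 8.1, 7.9, 8.0, 8.0),
    "GPT-4o":            (7.9, 7.7, 7.8, 7.6, 7.8, 7.9, 7.6),
}


def total(scores):
    """Sum the seven dimension scores into a total out of 70 (one decimal place)."""
    return round(sum(scores), 1)


def leaderboard(table):
    """Return (model, total) pairs sorted from highest to lowest total."""
    return sorted(((model, total(s)) for model, s in table.items()),
                  key=lambda pair: pair[1], reverse=True)


def best_per_dimension(table):
    """Map each rubric dimension to the model with the highest score on it."""
    return {dim: max(table, key=lambda model: table[model][i])
            for i, dim in enumerate(DIMENSIONS)}


for model, score in leaderboard(SCORES):
    print(f"{model:<18} {score:>5} / 70")
```

Run against the table's own numbers, every row sums to its listed total, and Opus 4.7 comes out on top in every dimension except plot logic, where GPT-5's 9.1 leads.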

How We Tested

The benchmarks you see online for "best LLM for writing" usually score against MMLU or HumanEval. Those tests are useless for fiction. We built a fiction-specific test suite instead.

Five genre prompts

Identical premise structure, varied genre. Literary (small-town secret), romance (enemies-to-lovers), thriller (kidnapping), fantasy (chosen-one inversion), sci-fi (first-contact ethics). Each prompt asked for a 2,500-word chapter mid-novel, with the story bible attached.

Long-context coherence test

After generating chapter 5, we passed all 5 prior chapters back into context and asked for chapter 12. Scored on whether the model remembered minor character names, established props, and earlier plot beats.
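For readers who want to rerun this test themselves, the Python sketch below shows one way to assemble that long-context prompt: story bible first, then each prior chapter labelled by number, then the request for chapter 12. The prompt wording, the helper names, and the 1.33 tokens-per-word ratio are all assumptions for illustration; real tokenizers count differently per provider, and this is not the exact prompt used in the test.

```python
# Rough words-to-tokens ratio for English prose. This is an assumption;
# each provider's tokenizer counts differently.
TOKENS_PER_WORD = 1.33


def build_continuity_prompt(story_bible: str, prior_chapters: list[str],
                            target_chapter: int, target_words: int = 2500) -> str:
    """Concatenate the story bible, every prior chapter, and the request into one prompt."""
    parts = ["STORY BIBLE:\n" + story_bible]
    for number, text in enumerate(prior_chapters, start=1):
        parts.append(f"CHAPTER {number}:\n{text}")
    parts.append(
        f"Write chapter {target_chapter} (about {target_words} words). "
        "Keep every established character name, prop, and plot beat "
        "consistent with the chapters above."
    )
    return "\n\n".join(parts)


def estimate_tokens(text: str) -> int:
    """Estimate the token count from whitespace-separated words."""
    return round(len(text.split()) * TOKENS_PER_WORD)


def fits_context(prompt: str, context_window_tokens: int,
                 reserve_for_output: int = 4000) -> bool:
    """True if the estimated prompt plus room for the reply fits in the window."""
    return estimate_tokens(prompt) + reserve_for_output <= context_window_tokens


if __name__ == "__main__":
    # Five placeholder 2,500-word chapters and a hypothetical one-line bible.
    placeholder_chapters = ["lorem " * 2500 for _ in range(5)]
    prompt = build_continuity_prompt("Hypothetical bible: names, props, timeline.",
                                     placeholder_chapters, target_chapter=12)
    print(estimate_tokens(prompt), fits_context(prompt, 200_000))
```

Five 2,500-word chapters come to roughly 12,500 words, which under this heuristic is well inside any frontier model's window; the window size only starts to bind at full-manuscript or multi-book scale.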

Same prompt, three runs

Each model ran the same prompt three times. We scored the average, not the best run. This penalises models that occasionally produce a great draft but are inconsistent.

Blind expert review

Three reviewers (one trad-published novelist, one indie author with 12+ KDP titles, one developmental editor) graded the samples without seeing which model produced them. The model labels were stripped before review.
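A minimal Python sketch of that blinding step, assuming each sample starts as a `(model, text)` pair: shuffle the samples, swap model names for neutral IDs, and keep the ID-to-model key away from the reviewers until grading is done. The function names and ID format are hypothetical. Note that stripping labels cannot remove stylistic tells inside the text itself.

```python
import random


def blind_samples(samples, seed=None):
    """Shuffle (model, text) pairs and replace model names with neutral sample IDs.

    Returns the blinded list of (sample_id, text) for reviewers, plus a private
    key mapping sample_id -> model that only the test organiser keeps.
    """
    rng = random.Random(seed)
    shuffled = list(samples)
    rng.shuffle(shuffled)  # so list position does not hint at the model
    blinded, key = [], {}
    for index, (model, text) in enumerate(shuffled, start=1):
        sample_id = f"S{index:02d}"
        blinded.append((sample_id, text))
        key[sample_id] = model
    return blinded, key


def unblind_grades(grades, key):
    """Map reviewer grades {sample_id: score} back to {model: [scores]}."""
    by_model = {}
    for sample_id, score in grades.items():
        by_model.setdefault(key[sample_id], []).append(score)
    return by_model
```

Reviewers only ever see the `blinded` list; `unblind_grades` runs after all scores are in.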

Defaults, not power-user settings

Standard temperature, no thinking-mode tweaks, no reasoning budgets. The goal is what an average author gets, not what a prompt-engineer can squeeze.

Raw model output only

Tests ran directly against each provider's API with no continuity layer, no story-bible tooling beyond the bible attached to each prompt, no rewrite pass, and no tool-side polish. Scores reflect what each model produces unaided. Tools like Inkfluence AI sit on top of these models and add the continuity, character bibles, and structural prompts that lift output meaningfully above the floor reported here.

The seven-dimension rubric is borrowed almost verbatim from our framework for evaluating AI novel quality: long-context coherence, character voice, plot logic, prose quality, dialogue, genre adherence, pacing. Each dimension is scored 0-10 and totalled out of 70.
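With three reviewers, three runs, and five genres, each model collects 45 graded samples. The Python sketch below shows one plausible way to collapse them into the table's per-dimension averages and a total out of 70, averaging every run rather than keeping the best. It assumes each grade is a dict of the seven dimensions; the input shape and helper names are hypothetical, not the reviewers' actual spreadsheet.

```python
from statistics import mean

DIMENSIONS = ("coherence", "voice", "plot", "prose", "dialogue", "genre", "pacing")


def dimension_averages(grades):
    """Average each rubric dimension over every graded sample.

    `grades` is a list of dicts, one per (reviewer, run, genre) combination,
    each mapping all seven dimension names to a 0-10 score. Every run is
    averaged in, rather than keeping the best one, which penalises models
    that are inconsistent from run to run.
    """
    if not grades:
        raise ValueError("need at least one graded sample")
    for grade in grades:
        for dim in DIMENSIONS:
            if not 0 <= grade[dim] <= 10:
                raise ValueError(f"{dim} score {grade[dim]} is outside 0-10")
    return {dim: round(mean(g[dim] for g in grades), 1) for dim in DIMENSIONS}


def rubric_total(averages):
    """Sum the seven dimension averages into a total out of 70."""
    return round(sum(averages[dim] for dim in DIMENSIONS), 1)
```

For example, one sample graded 8 across the board and another graded 9 average to 8.5 per dimension and 59.5 / 70 overall.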

Claude Opus 4.7: The Prose Champion

Total: 63.2 / 70. Highest in prose (9.5) and character voice (9.4), and top in every dimension except plot logic.

Opus 4.7 produced the most readable, least-AI-flavoured fiction in the test. The defining quality is sentence-level rhythm. Other models string together competent declarative sentences. Opus varies cadence, breaks rhythm with deliberate fragments, lets a paragraph breathe before the next beat. The literary chapter test was the cleanest demonstration. The opening paragraph included a 41-word sentence that turned on a single comma, followed by three sharp two-word sentences. None of the other models attempted that move at default settings.

Character voice held the steadiest across multiple chapters. When we passed back chapters 1-5 and asked for chapter 12, Opus correctly maintained two characters' distinct speech patterns, including a minor secondary character who only appeared in chapter 3. GPT-5 lost that character's voice entirely. Sonnet 4.5 held it with roughly 90% accuracy.

The downsides are speed and price. Opus is roughly 4x slower than Sonnet 4.5 for the same chapter and about 5x more expensive per token at list prices ($15/$75 vs $3/$15 per million input/output tokens). For a 70,000-word novel generated end-to-end, the difference is roughly $32 vs $5.50 in raw API spend. For most working authors, the quality bump justifies the cost only on the climactic chapters, not on every chapter. We come back to this in the real cost section.

Best for

Literary fiction, emotionally complex scenes, the climax and final chapters of any novel, voice-driven memoirs. If you want a polished line-level draft and you do not flinch at the API bill, Opus is the highest-quality option in 2026. Inkfluence AI's chapter generator routes high-stakes scenes to top-tier prose models so the writer gets that quality lift without paying $32 per book in raw tokens.

Claude Sonnet 4.5: The Best Overall Pick

Total: 60.9 / 70. Within 4% of Opus on quality, 4x faster, ~6x cheaper per novel.

Sonnet 4.5 is the model we recommend most authors actually use, regardless of whether they realise they are using it. The Sonnet output is functionally indistinguishable from Opus for most readers in most scenes. The differences only surface in literary fiction's most demanding paragraphs. For genre fiction (romance, thriller, fantasy), Sonnet's output is publishable-quality on a first draft with light editing.

The real argument for Sonnet is the speed-quality-cost ratio. A full novel chapter generates in 30-50 seconds vs 2-3 minutes for Opus. That changes the workflow. Authors do not just generate one chapter and call it a day; they generate, review, regenerate, and refine. With Opus, that loop is friction. With Sonnet, it is fast enough to iterate freely.

Sonnet's weakness compared to Opus is at the highest end of literary craft: meta-narrative tricks, layered subtext, prose that wants to do something stylistically unusual. For 95% of commercial fiction, the gap does not show.

Best for

Active drafting, mid-novel chapters, genre fiction (romance, mystery, thriller, fantasy, sci-fi), nonfiction with narrative voice, anything where you will be doing 5+ regenerations to dial in the chapter. This is the workhorse model class for novel drafting. Inkfluence AI routes the bulk of chapter generation through this performance tier with unlimited regenerations on Creator and Premium, which is the part of BYOK that gets brutally expensive at $0.50-$1 per regeneration on raw API.

GPT-5: The Plot Logician

Total: 59.6 / 70. Highest score in plot logic (9.1).

GPT-5 is the strongest model for keeping a complex plot internally consistent. In the thriller chapter (which involved a multi-character kidnapping with three competing motivations), GPT-5 produced the only chapter where all three character motivations remained logically consistent with chapter 3's setup. Sonnet and Opus both fudged one motivation slightly. This matters most for plot-driven genres: mystery, thriller, hard sci-fi, anything with a puzzle that has to land.

GPT-5's prose is competent but flatter than Claude's. Sentences default to subject-verb-object structure. Dialogue tends toward functional exposition. Character voice differentiation is the weakest of the three top-ranked models (8.2, vs 9.4 for Opus and 9.0 for Sonnet). When two characters are speaking, you can usually tell which one is which, but they sound less distinct than in Claude output.

The reasoning-mode variant of GPT-5 changes this picture. With extended thinking enabled, GPT-5 produces meaningfully better plot architecture, but the price climbs steeply and the latency makes it impractical for chapter-by-chapter generation. We do not recommend reasoning-mode GPT-5 for drafting, only for outline creation.

Best for

Plot-heavy genres (mystery, thriller, hard sci-fi), outlining and story structure, novels with foreshadowing or a "puzzle" element, technical and procedural fiction. Most writers do not have time to manually swap models between outlining and drafting, so this advantage rarely shows up in practice. Inkfluence's outline tool and its mystery and thriller workflow route plot-critical steps to the model that scores highest on plot logic, then hand off to a different model for the prose.

Gemini 2.5 Pro: The Long-Context Workhorse

Total: 57.5 / 70. Long-context coherence of 9.0, second only to Opus 4.7 (9.2) in the averaged scores, and the strongest performer when feeding 50,000+ words.

Gemini 2.5 Pro's superpower is its 1M-token context window. For a working novelist, this is the difference between feeding the model 5 prior chapters and feeding it the entire 60,000-word draft. The continuity advantage at scale is real. In the long-context test where we passed all prior chapters at chapter 12, Gemini was the only model that correctly referenced a setup from chapter 1 with full detail.

The trade-off is prose quality. Gemini's default fiction output reads slightly more like polished journalism than novel prose. Sentences are clean but rhythmically uniform. Dialogue tends toward grammatical correctness over voice. Editing Gemini drafts toward "novel feel" takes more passes than editing Sonnet or Opus drafts.

For series authors writing book 4 of a 7-book saga, the giant context window starts to matter more than the prose finish. Most working novelists are not in that situation. They are writing book 1 or 2 with 50,000 words of context, which Sonnet handles fine.

Best for

Long series with extensive lore, world-building docs over 100,000 words, books that need the full prior text in context, codex-heavy fantasy and sci-fi. For series writers specifically, see Inkfluence's multi-book series writer workflow. Its story bible carries continuity across books without needing to feed every prior word back into a single prompt.

GPT-4o: The Cheap Drafter

Total: 54.3 / 70. Roughly 70% of Sonnet 4.5's cost per novel (~$3.80 vs ~$5.50) for about 89% of its total score.

GPT-4o is the budget option that is still, in 2026, perfectly usable for fiction first drafts. It will not produce literary prose. It will produce functional, genre-aware chapters that you can edit. For an author writing rapid-release genre fiction (the romance reader market in particular, where readers prize series velocity over prose finish), GPT-4o is the model whose economics make the math work.

The weak spots are character consistency in longer books and dialogue that occasionally lapses into generic phrasing. Both are fixable in editing. For authors whose workflow is "AI drafts, I rewrite the dialogue and emotional beats," GPT-4o is a real option at roughly two-thirds the price of Sonnet for first-pass output ($10 vs $15 per million output tokens).

Best for

Rapid-release genre fiction, first-pass drafting where you plan to rewrite heavily anyway, projects on a tight token budget, lighter formats (novellas, shorts). Inkfluence's free plan (5 chapters per month, no credit card) covers the same use case without API setup or token tracking.

Best Model by Genre

The averaged scores in the leaderboard hide genre-specific differences. Here is the by-genre verdict from the test:

| Genre | Top model | Why |
|---|---|---|
| Literary fiction | Claude Opus 4.7 | Prose rhythm, sentence-level craft, willingness to write a 40-word sentence that lands. |
| Romance | Claude Sonnet 4.5 | Best at internal monologue, emotional pacing, slow-burn dialogue tension. See our AI romance novel writer page. |
| Mystery / thriller | GPT-5 | Tightest plot logic, best at maintaining clue-and-payoff structure. Sonnet a close second. See our AI mystery thriller writer. |
| Fantasy | Claude Sonnet 4.5 | Strongest world-building voice consistency over multiple chapters. Gemini second for series with deep lore. |
| Sci-fi (hard) | GPT-5 | Internal logic of speculative systems, technical exposition that is plausible without being dry. |
| Sci-fi (literary) | Claude Opus 4.7 | When the sci-fi is character-driven (Le Guin, Tchaikovsky tradition) Opus's prose wins. |
| Series book 4+ | Gemini 2.5 Pro | Long context window handles series bibles and prior books without summarisation loss. |
| Children's fiction | Claude Sonnet 4.5 | Voice control at simpler reading levels, clean dialogue. See our children's book guide. |

Real Cost Per Novel

API pricing changed in early 2026 and most published comparisons are stale. Here is the actual cost per 70,000-word novel at April 2026 list prices, assuming average prompt overhead and one regeneration per chapter:

| Model | Input $/1M | Output $/1M | Per 70k novel |

|---|---|---|---|

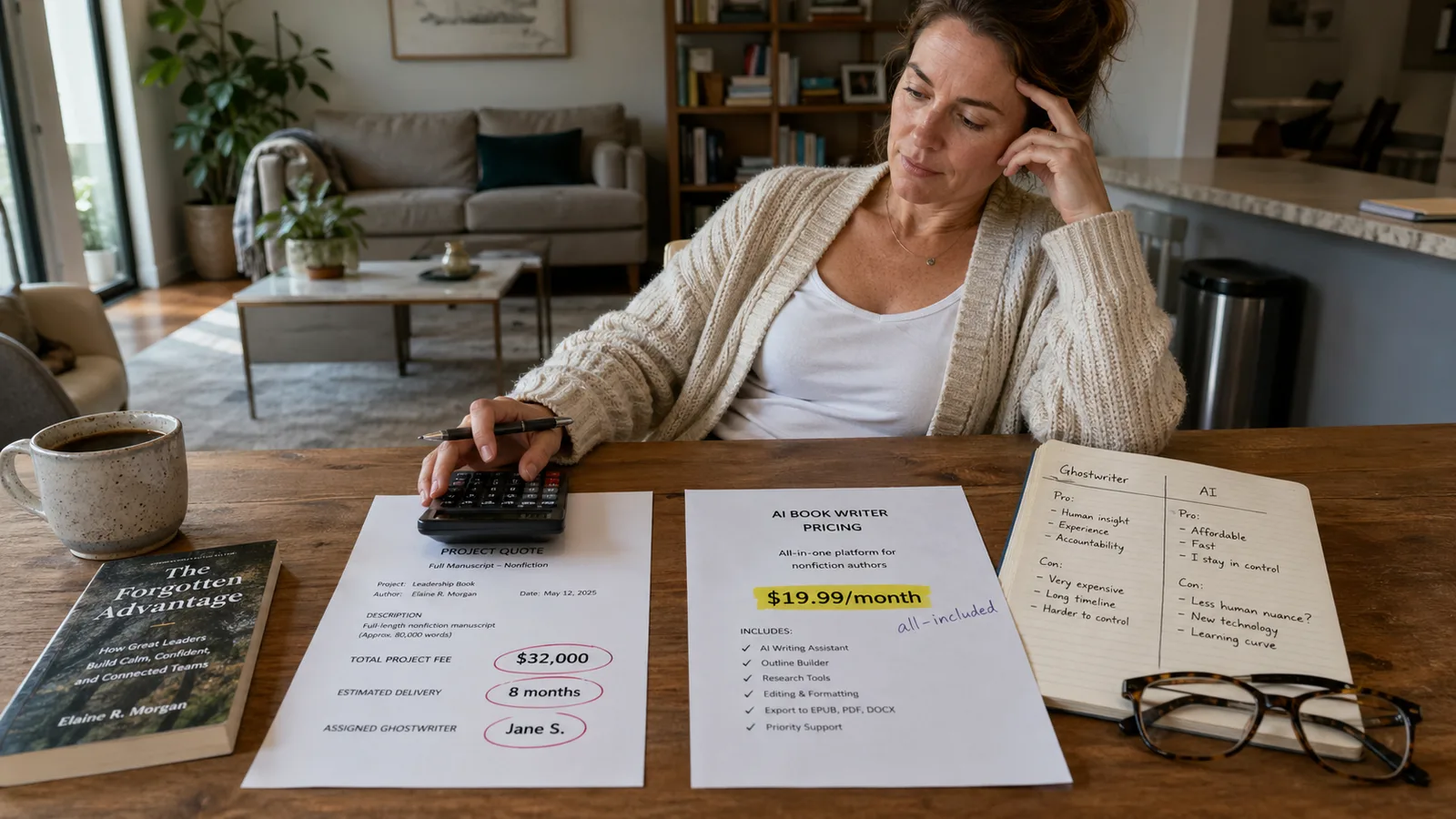

| Claude Opus 4.7 | $15 | $75 | ~$32 |

| Claude Sonnet 4.5 | $3 | $15 | ~$5.50 |

| GPT-5 | $5 | $15 | ~$6.40 |

| Gemini 2.5 Pro | $2.50 | $10 | ~$4.20 |

| GPT-4o | $2.50 | $10 | ~$3.80 |

| Inkfluence AI Premium (flat) | — | — | $19.99/mo, unlimited novels |

Prices are publicly listed model prices as of April 2026. Actual cost varies with prompt overhead, regeneration count, and any reasoning-mode usage. The figures above assume one regeneration per chapter. In practice novelists run 2-3 regenerations to dial in tone, which doubles or triples the API spend.
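The regeneration multiplier is easy to see in a back-of-envelope estimator. The Python sketch below is hypothetical: the 1.33 tokens-per-word ratio and the 3:1 input-to-output overhead are placeholder assumptions, not the assumptions behind the table, so it will not reproduce the table's figures exactly. It only shows how price per million tokens and generation count combine.

```python
def novel_api_cost(input_price_per_m, output_price_per_m, words=70_000,
                   generations_per_chapter=2, tokens_per_word=1.33,
                   input_to_output_ratio=3.0):
    """Rough raw-API cost in USD for drafting a whole novel.

    Assumptions (all hypothetical; tune them to your own workflow):
    - every generation rewrites the full chapter, so output tokens scale with
      generations_per_chapter (2 = one draft plus one regeneration);
    - prompt overhead (story bible, prior chapters, instructions) is modelled
      as input_to_output_ratio input tokens per output token.
    """
    output_tokens = words * tokens_per_word * generations_per_chapter
    input_tokens = output_tokens * input_to_output_ratio
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# Sonnet 4.5 list prices: one regeneration per chapter vs three.
one_regen = novel_api_cost(3, 15, generations_per_chapter=2)
three_regens = novel_api_cost(3, 15, generations_per_chapter=4)
print(f"${one_regen:.2f} vs ${three_regens:.2f}")
```

Because every term scales linearly with `generations_per_chapter`, going from one regeneration per chapter to three doubles the spend under these assumptions, and swapping in Opus 4.7's list prices multiplies it by five.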

This is where the BYOK math collapses. A working novelist generating one book per month on premium models with realistic regeneration spends $50-100 in tokens. Inkfluence AI Premium at $19.99/mo covers unlimited novels with cover, EPUB, PDF, DOCX, and ACX-spec audiobook included. At that per-book spend, the break-even is well under half a book per month (roughly 0.2-0.4 books): anyone writing more than that pays less on a flat-rate tool than they would on raw API. We worked through the full math, including hidden cover and audiobook costs, in our BYOK vs all-included cost breakdown.
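The break-even point is a single division: flat monthly price over API cost per book. The Python sketch below uses the $19.99 plan price and the $50-100 per-book range quoted above; it ignores cover and audiobook costs, which only push the break-even lower. The helper names are illustrative.

```python
def break_even_books(flat_monthly_usd, api_cost_per_book_usd):
    """Books per month above which a flat subscription beats paying per book via API."""
    if api_cost_per_book_usd <= 0:
        raise ValueError("per-book API cost must be positive")
    return flat_monthly_usd / api_cost_per_book_usd


def cheaper_option(books_per_month, flat_monthly_usd, api_cost_per_book_usd):
    """Return 'flat' or 'api' for a given monthly output (ties go to 'api')."""
    api_total = books_per_month * api_cost_per_book_usd
    return "flat" if flat_monthly_usd < api_total else "api"


for per_book in (50, 100):  # the $50-100 premium-model range quoted above
    print(f"${per_book}/book -> break-even at "
          f"{break_even_books(19.99, per_book):.2f} books/month")
```

This prints break-even points of 0.40 and 0.20 books per month for the two ends of the range.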

Why Picking a Tool Beats Picking a Model

The numbers above tempt the technical reader to roll their own stack: Opus for the climax, Sonnet for drafting, Gemini for series continuity, GPT-5 for outlining, swap models per task, save the 4-figure freelance editor bill. The temptation is real. The reality is that almost nobody who tries this actually finishes a novel.
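The routing logic itself is the easy part. The Python sketch below encodes the per-task picks from this post as a lookup table, with a budget switch that drafts on GPT-4o. The task names and `route` helper are hypothetical, and the strings are display names rather than provider API model identifiers; everything around this table (API clients, prompts, retries, exports) is where the real work sits.

```python
from enum import Enum


class Task(Enum):
    OUTLINE = "outline"
    DRAFT = "draft"
    CLIMAX = "climax"
    SERIES_CONTINUITY = "series_continuity"


# Per-task picks taken from the test results in this post.
# Display names only, not provider API model identifiers.
ROUTES = {
    Task.OUTLINE: "GPT-5",                     # highest plot-logic score
    Task.DRAFT: "Claude Sonnet 4.5",           # best speed/quality/cost balance
    Task.CLIMAX: "Claude Opus 4.7",            # strongest prose and voice
    Task.SERIES_CONTINUITY: "Gemini 2.5 Pro",  # largest context window
}


def route(task: Task, budget_mode: bool = False) -> str:
    """Pick a model for a novel-writing task; budget mode drafts on GPT-4o instead."""
    if budget_mode and task is Task.DRAFT:
        return "GPT-4o"
    return ROUTES[task]
```

Keeping the mapping in one table means a new model release changes one line rather than every call site.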

Building a novel-quality output on raw model APIs means writing your own continuity layer, character bible system, scene-state tracker, retry logic, regeneration UI, EPUB export pipeline, KDP-spec cover renderer, ACX-compliant audiobook narration with -3 dBFS peak limiting, and a prompt library tuned to each model's quirks. None of that has anything to do with writing a novel. It is software engineering, and even technical writers run out of patience for it around chapter 3.
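To give a sense of what even one item on that list involves, here is a minimal Python sketch of an ACX-style level check on decoded audio. It assumes mono float samples in the range -1.0 to 1.0 and checks ACX's published targets of peaks at or below -3 dB and RMS between -23 and -18 dB. It is a simplification: it measures sample peak rather than true (inter-sample) peak, uses unweighted RMS, skips ACX's -60 dB noise-floor requirement, and is not ACX's own checker.

```python
import math

ACX_PEAK_MAX_DBFS = -3.0
ACX_RMS_RANGE_DBFS = (-23.0, -18.0)


def to_dbfs(amplitude: float) -> float:
    """Convert a linear amplitude (1.0 = full scale) to dBFS."""
    return 20 * math.log10(amplitude) if amplitude > 0 else float("-inf")


def acx_level_check(samples: list[float]) -> dict:
    """Measure sample peak and unweighted RMS for mono float audio in [-1.0, 1.0]."""
    if not samples:
        raise ValueError("no samples to measure")
    peak = to_dbfs(max(abs(s) for s in samples))
    rms = to_dbfs(math.sqrt(sum(s * s for s in samples) / len(samples)))
    low, high = ACX_RMS_RANGE_DBFS
    return {
        "peak_dbfs": peak,
        "rms_dbfs": rms,
        "peak_ok": peak <= ACX_PEAK_MAX_DBFS,
        "rms_ok": low <= rms <= high,
    }


if __name__ == "__main__":
    # One second of a 440 Hz test tone at 44.1 kHz, scaled to about -20 dBFS RMS.
    rate, amplitude = 44_100, 0.1414
    tone = [amplitude * math.sin(2 * math.pi * 440 * n / rate) for n in range(rate)]
    print(acx_level_check(tone))
```

A production pipeline would also need decoding, loudness normalisation, a limiter, and per-chapter file handling on top of this check.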

This is the gap a tool fills. Inkfluence AI handles the model routing, the continuity, the story bible, the prompts, the regeneration, and the publishing pipeline so the writer is doing the part that actually matters: telling the story. The model layer is an implementation detail. Inkfluence runs on the same frontier models tested in this post, routes per-task to the strongest one for that step, and prices it all at $19.99/month flat with cover, EPUB, PDF, DOCX, and ACX-spec audiobook included. The free tier covers 5 chapters per month with no credit card, which is enough to test whether AI novel writing is the workflow for you before you decide to commit to anything.

For the platform-by-platform comparison see our ranked roundup of the best AI novel writers in 2026, our novel-writing software listicle with the per-novel cost table, and our deep dive on tools that finish 70,000+ word books.

The shortcut

Skip the stack-building. Try Inkfluence AI's novel writer free. Generate a full 8-12 chapter novel with character continuity, then export to EPUB, PDF, or audiobook. First 5 chapters are free every month, no API key required, no credit card.

What Will Change This Year

Three trends to watch through the rest of 2026:

- Reasoning-mode pricing will fall. The current premium for extended thinking on GPT-5 and Sonnet 4.5 is roughly 5-10x base price. By Q3 2026 expect that to halve, which makes reasoning-mode practical for actual chapter generation rather than just outlining.

- Context windows past 5M tokens. Gemini's lead here will be matched. Series authors writing book 6+ will have model options that can carry the full prior series in context without summarisation.

- Smaller, fiction-tuned models. Expect at least one provider to release a sub-frontier model fine-tuned specifically on long-form fiction. The cost-quality trade should beat GPT-4o for novel-specific tasks.

FAQ

What is the best AI model for writing a novel in 2026?

Is Claude better than GPT-5 for writing fiction?

What is the cheapest AI model that can write a full novel?

Which AI model has the largest context window for writing long novels?

Does Claude Sonnet 4.5 actually write well enough to publish?

Should I use GPT-5 or Claude for outlining a novel?

Which AI model handles character continuity best across chapters?

What is the best free AI for writing a novel?

Are AI fiction benchmark scores reliable?

Which AI model should I use to write a romance novel?

What to Read Next

How to Evaluate AI Novel Writer Quality

The seven-dimension fiction rubric used in this test, plus a 30-minute test recipe to grade any tool yourself.

BYOK vs All-Included: The Real Cost

Full-novel cost breakdown across model APIs, with the break-even point where flat-rate subscriptions win.

Best AI Tools for Long Novels in 2026

Tools (not models) that handle 70,000+ word books with continuity. Companion to this model-level comparison.

Best AI for Novel Continuity Checking

How tools layer continuity prompting on top of base models to fix the character-drift problem.

Can AI Write a Novel? What Actually Works

The realistic AI novel workflow, what AI does well, where it falls short, which genres work best.

Best AI Novel Writer 2026 (Tools Roundup)

Eight novel-writing platforms ranked. Pairs with this model-level test to give you the full picture.

Founder, Inkfluence AI

Sam is the founder of Inkfluence AI. He built the platform to make book creation accessible to everyone, from first-time authors to seasoned publishers.

Helpful links

Ready to Create Your Own Ebook?

Start writing with AI-powered tools, professional templates, and multi-format export.

Get Started FreeRelated Articles

AI Writing

AI Writing AI vs Ghostwriter Cost in 2026: What a Nonfiction Book Actually Costs Both Ways

Verified 2026 ghostwriter pricing ($5,000 entry-level to $250,000+ for elite) vs AI book writers ($0 to $19.99/mo). Real ranges, when each makes sense, and the hybrid that beats both.

AI Writing

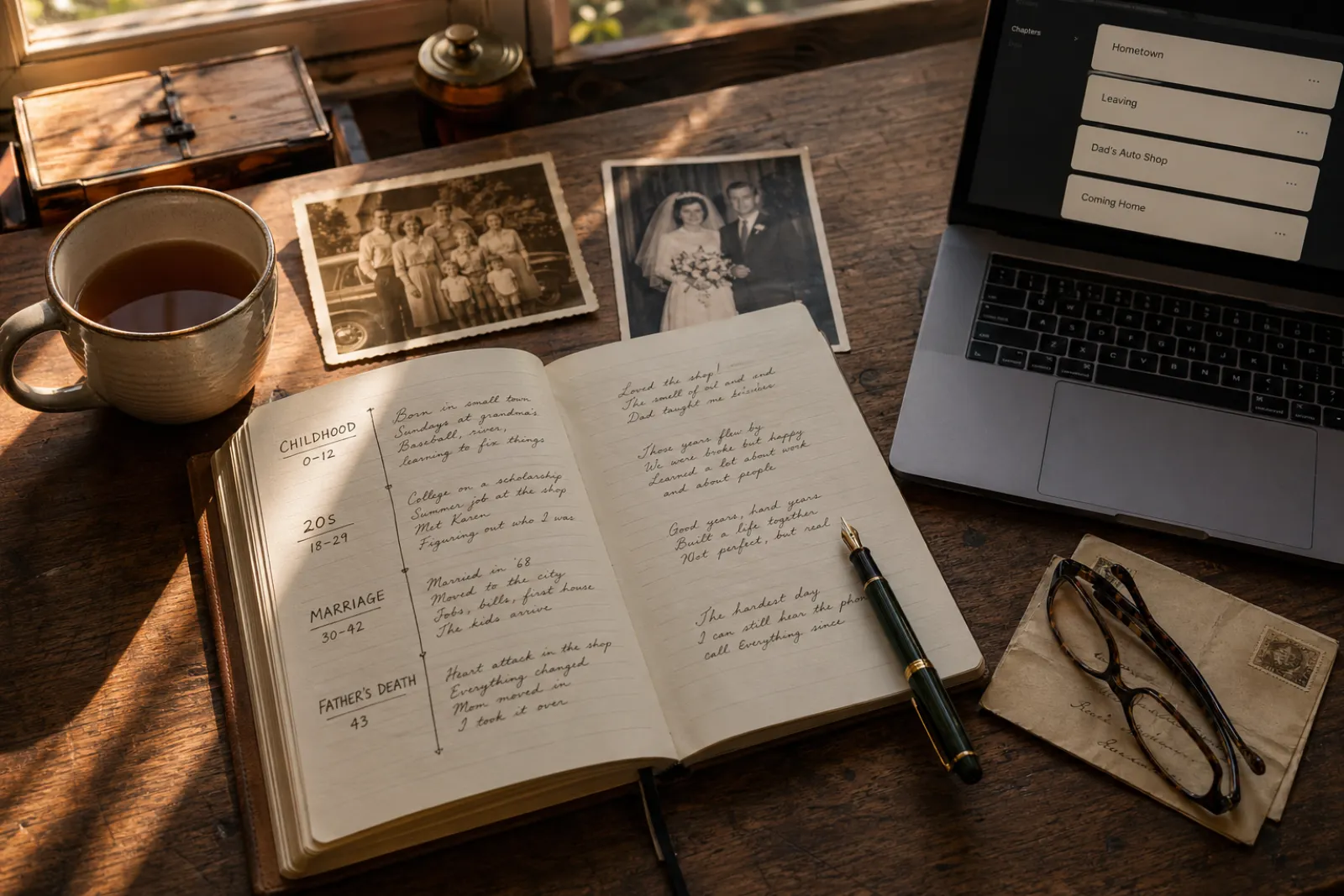

AI Writing How to Write a Memoir with AI in 2026: A Step-by-Step Guide

A practical, honest guide to writing a memoir with AI in 2026. The 7-step process from premise to published book, what AI does well, what stays in your hands, and why the system handles "I met Quincy Jones at a charity benefit" without turning it into fan fiction.

AI Writing

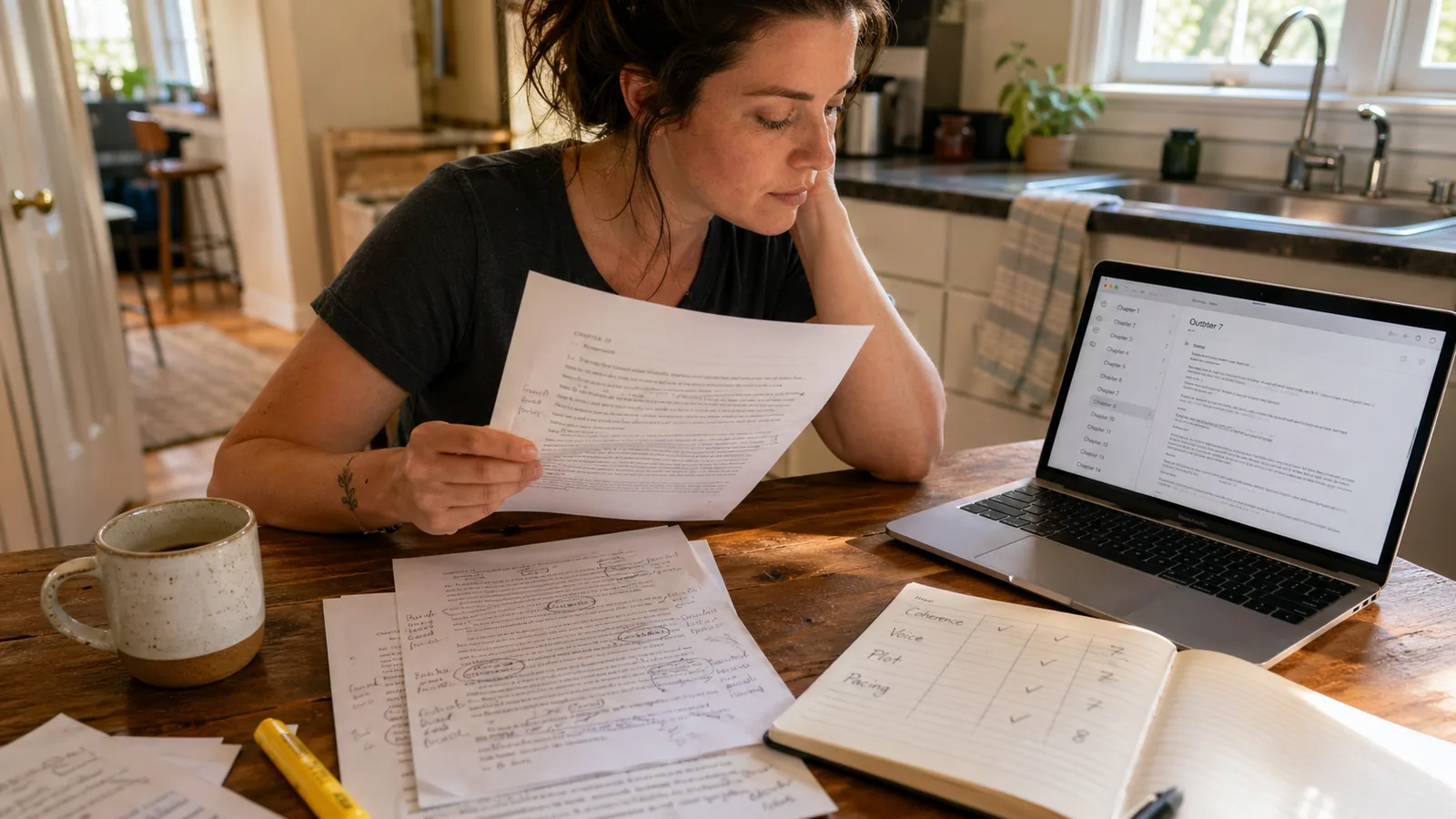

AI Writing How to Evaluate AI Novel-Writing Quality in 2026: 7 Dimensions That Matter

A practical framework for telling a good AI novel writer from a bad one. The 7 quality dimensions readers actually feel, how to test each one in under an hour, and the red flags that show up in chapter 3.

Get ebook tips in your inbox

Join creators getting weekly strategies for writing, marketing, and selling ebooks.