How to Evaluate AI Novel-Writing Quality in 2026: 7 Dimensions That Matter

A practical framework for telling a good AI novel writer from a bad one. It covers the 7 quality dimensions readers actually feel, how to test them all in under an hour, and the red flags that show up by chapter 3.

Quick Answer

A good AI novel writer is judged on 7 dimensions readers actually feel: long-context coherence, character voice consistency, plot logic and payoff, prose voice, tone calibration, show-versus-tell balance, and pacing across acts. The cheap test is to generate three chapters and read chapter 3 first. If it knows what happened in chapters 1 and 2, names the right characters, keeps voice steady, and lands a beat instead of summarising one, the system clears the bar. If chapter 3 reads like a fresh start with new descriptions of the same people, you have a tool that drafts but does not write a novel.

Why This Matters

Most "best AI novel writer" lists rank features. Readers do not feel features.

Chapter counts, export formats, and integrations matter for choosing a workflow. They do not matter for whether a reader finishes your book. What readers feel is whether the protagonist sounds like the same person on page 240 as on page 12, whether the antagonist's threat builds or just repeats, and whether the ending earned the setup.

Those are quality dimensions. They survive feature releases, model upgrades, and pricing changes. Evaluate AI novel writers on these instead, and you will pick a tool that produces a book worth publishing rather than one with the longest comparison table.

If you generate three chapters with any AI novel writer in 2026, the surface always looks fine. Sentences are grammatical. Paragraphs flow. Dialogue uses quotation marks correctly. The bad ones do not fail at the sentence level. They fail at the book level, three chapters in, when the protagonist's eye colour changes or the antagonist quietly stops mattering.

Telling those tools apart from the good ones means knowing what to look for. This guide is the framework we use internally when shipping novel-writing features, and what we recommend authors use when comparing tools. It is not a vendor review. It is a quality rubric you can apply to any AI novel writer in under an hour.

The Mental Model: Process Beats Prose

Every AI novel writer in 2026 can produce a competent paragraph. The single-sentence test, where you ask the tool to write a tense moment in a thriller, is useless because every tool clears it. The interesting question is what happens when the same tool has to remember what was tense an hour ago, why it was tense, and whose threat created it.

That is a process question, not a prose question. The tools that produce publishable novels in 2026 are the ones whose underlying process feeds the right context into each new chapter generation. The tools that produce fluent-but-disposable drafts treat each chapter as a one-shot prompt and lose the thread.

Hold this in mind through the rest of the guide. When you read about character voice consistency, you are really asking: does the system know who is speaking, and what their voice was last time? When you read about plot logic, you are asking: does the system know what was set up, and is it being paid off? Quality is downstream of process. The seven dimensions below are how you measure whether the process is working.

The 7 Quality Dimensions

1. Long-context coherence

Coherence is the test of whether chapter N is consistent with chapters 1 through N-1. Names, descriptions, established facts, prior events, and previously revealed information should all hold steady. The classic failure: the protagonist's hometown is Portland in chapter 4 and Seattle in chapter 12. The reader pulls out of the book.

Long-context coherence is the most foundational quality dimension because every other dimension depends on it. A character cannot have a consistent voice if the system has forgotten who the character is. A plot cannot have a payoff if the setup has been overwritten. Test this first.

You measure it by reading two chapters that are five or more chapters apart and looking for contradictions in the concrete details: hair colour, age, profession, named relationships, named locations, named objects. Coherent tools hold these steady from the first chapter to the last. Incoherent tools contradict themselves within the same book.
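If you prefer to keep the comparison honest with notes rather than memory, the check can be sketched in a few lines of Python. This is a minimal sketch, not a feature of any tool: it assumes you jot down facts by hand as you read, and the function name `find_contradictions` and the note format are hypothetical.

```python
from collections import defaultdict


def find_contradictions(facts_by_chapter):
    """Return facts that take more than one value across chapters.

    facts_by_chapter maps a chapter number to a dict of
    {(entity, attribute): value}, written down by hand while reading.
    """
    seen = defaultdict(dict)  # (entity, attribute) -> {normalised value: first chapter seen}
    for chapter in sorted(facts_by_chapter):
        for key, value in facts_by_chapter[chapter].items():
            # Lower-case so "Black" and "black" do not count as a contradiction.
            seen[key].setdefault(value.strip().lower(), chapter)
    return {key: values for key, values in seen.items() if len(values) > 1}


# Hypothetical reading notes for two chapters that are far apart.
notes = {
    4: {("protagonist", "hometown"): "Portland", ("protagonist", "hair"): "black"},
    12: {("protagonist", "hometown"): "Seattle", ("protagonist", "hair"): "Black"},
}

contradictions = find_contradictions(notes)
for (entity, attribute), values in contradictions.items():
    print(entity, attribute, values)
```

With these notes, only the hometown is reported, because the hair colour differs in capitalisation alone. The script only compares what you wrote down, so it is no better than your notes.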

2. Character voice consistency

Voice consistency is the harder, more interesting cousin of coherence. The system can know that the protagonist is named Alex without knowing how Alex talks. A clipped, sardonic ex-detective should not deliver three pages of warm, exclamation-mark-heavy dialogue in chapter 18 just because the AI got drifty.

This is where weak novel writers most obviously fail. Dialogue homogenises. Every character sounds like an articulate, neutral, slightly polite version of the AI's default voice. By chapter 10, the cynical mentor and the optimistic apprentice are indistinguishable.

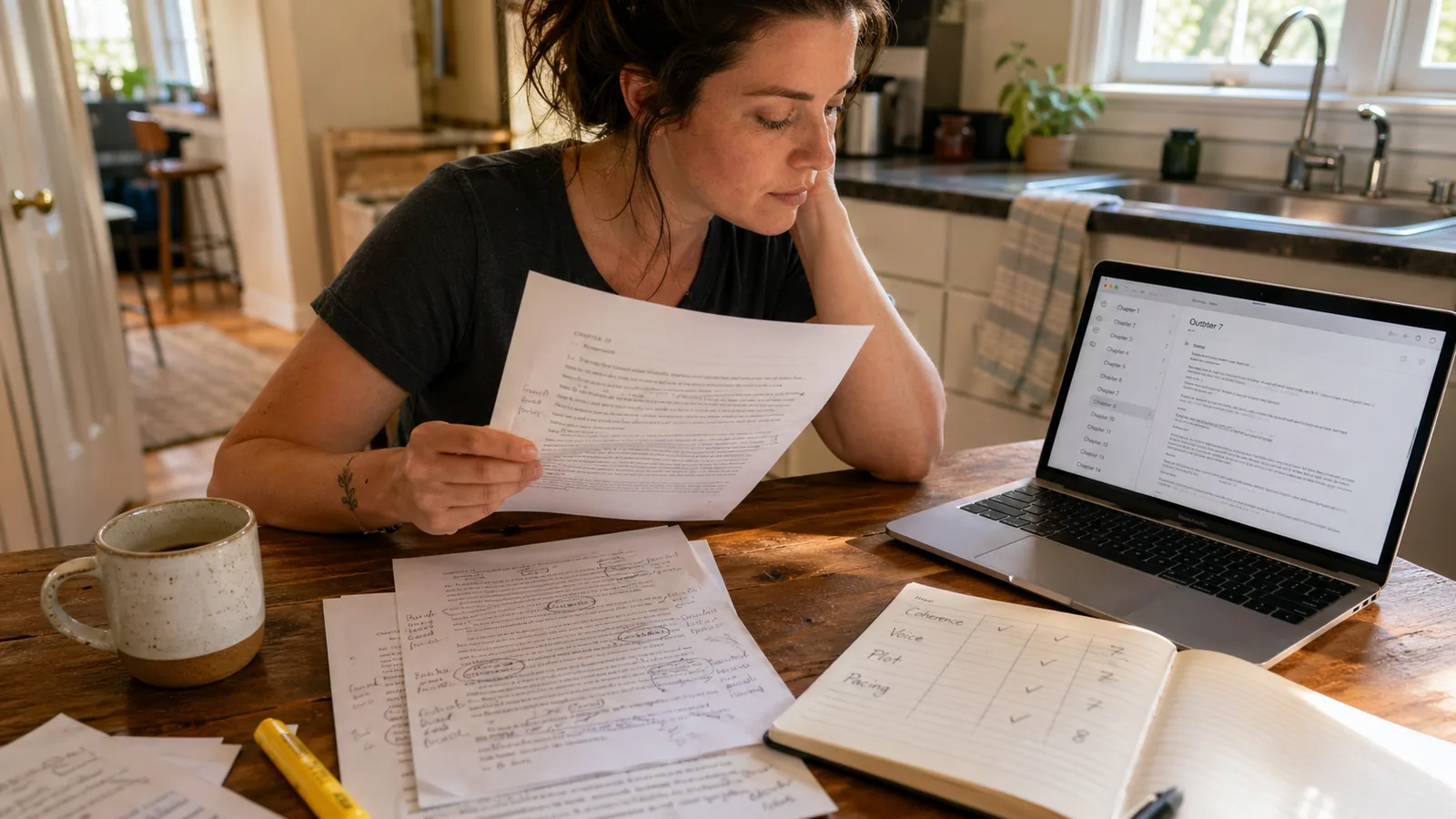

Test this by reading dialogue from three different characters across multiple chapters with the speaker tags removed. If you can guess who is speaking, the tool clears the bar. If everyone sounds like the same narrator, it does not. Tools that maintain a story bible or character cards usually win this dimension. Tools that do not usually fail it. Read more in our guide to AI novel continuity checking.
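The blind dialogue test is easier to run fairly if something else shuffles the lines and holds the answer key. The sketch below assumes you have already copied out lines of dialogue by hand with their speakers; `build_blind_test` and `score_guesses` are hypothetical helper names, and the sample lines are invented for illustration.

```python
import random


def build_blind_test(samples, seed=0):
    """Shuffle (speaker, line) pairs and split them into lines and an answer key."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    lines = [line for _, line in shuffled]
    answer_key = [speaker for speaker, _ in shuffled]
    return lines, answer_key


def score_guesses(guesses, answer_key):
    """Fraction of lines attributed to the right speaker."""
    if len(guesses) != len(answer_key):
        raise ValueError("one guess per line is required")
    correct = sum(guess == truth for guess, truth in zip(guesses, answer_key))
    return correct / len(answer_key)


# Invented sample lines; in practice these come from the generated chapters.
samples = [
    ("mentor", "You'll learn. Or you won't. Either way I get paid."),
    ("apprentice", "But imagine if it works! Imagine what we could build!"),
    ("detective", "Save it. Where were you at nine?"),
]

lines, answer_key = build_blind_test(samples)
for number, line in enumerate(lines, start=1):
    print(number, line)
```

Write your guesses down before looking at `answer_key`, then pass both to `score_guesses`. With three speakers and equal numbers of lines, random guessing lands near one third, so a score close to that suggests the voices have homogenised.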

3. Plot logic and payoff

Plot logic is whether what is set up gets paid off. A gun introduced in chapter 2 should be fired by chapter 30, or its absence should be a deliberate writing choice rather than a forgotten thread. Subplots should resolve. Foreshadowing should land. The antagonist should escalate, not vanish.

Most AI novel writers can hit this for short books, around 30,000 words, where the entire plot fits inside a single context window. They struggle on novels of 70,000+ words because the early setup falls out of the model's working memory by the time the late chapters are being generated. The result is a book that reads competently chapter by chapter and frustratingly when read whole.

Test plot logic by writing one sentence for each chapter that summarises what was set up and what was paid off, then reading the list end to end. Setups without payoffs are a red flag. Payoffs without setups are worse. Tools that pre-allocate plot beats at outline time, and feed those beats into the chapter generation prompt, tend to clear this dimension. Tools that generate chapters without an outline reference do not.
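The one-sentence-per-chapter list can be kept as a small ledger so that dangling threads surface automatically. This is a sketch under the assumption that you label each thread yourself with a short, consistent name; the function `audit_threads` and the note format are hypothetical.

```python
def audit_threads(chapter_notes):
    """Find setups that are never paid off and payoffs that were never set up.

    chapter_notes is a list of dicts with a 'chapter' number and optional
    'setups' and 'payoffs' lists of thread labels, written by the reader.
    """
    first_setup = {}
    first_payoff = {}
    for note in sorted(chapter_notes, key=lambda n: n["chapter"]):
        for thread in note.get("setups", []):
            first_setup.setdefault(thread, note["chapter"])
        for thread in note.get("payoffs", []):
            first_payoff.setdefault(thread, note["chapter"])

    # Setups without payoffs: the red flag.
    dangling = sorted(t for t in first_setup if t not in first_payoff)
    # Payoffs without setups (or paid off before being set up): the worse case.
    unearned = sorted(
        t for t in first_payoff
        if t not in first_setup or first_payoff[t] < first_setup[t]
    )
    return dangling, unearned


# Hypothetical notes from chapters 2, 12 and 24 of a generated novel.
notes = [
    {"chapter": 2, "setups": ["gun in drawer", "missing letter"]},
    {"chapter": 12, "payoffs": ["missing letter"]},
    {"chapter": 24, "payoffs": ["secret twin"]},
]

dangling, unearned = audit_threads(notes)
print("Setups without payoffs:", dangling)
print("Payoffs without setups:", unearned)
```

Here the gun is never fired and the secret twin arrives from nowhere, which are the two patterns the paragraph above tells you to look for.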

4. Prose voice

Prose voice is the texture of the writing itself. Is it boring, corporate, generic? Is it overwrought and purple? Or does it have the kind of distinctive grain a reader settles into within a few pages?

Generic AI prose has a recognisable flatness. Sentence rhythms are uniform, often around 12 to 18 words. Vocabulary is competent but unremarkable. Adjectives stack predictably (gentle smile, cold steel, soft light). Metaphors are the safest available. Reading three pages feels like reading a competent first-year creative writing student who has read a lot of bestsellers but written nothing personal.

Distinctive prose breaks the pattern. Sentences vary from three words to forty. Vocabulary picks the precise word over the safe one. Metaphors are concrete and specific to the scene. Test this by reading aloud. Generic prose sounds smooth and forgettable. Distinctive prose has texture. The honest acceptance threshold: you still remember a line a day later. For more on stripping the corporate-bestseller flatness, see how to avoid generic AI book output.
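Reading aloud remains the real test, but the uniform-rhythm symptom can be checked roughly by counting words per sentence. The sketch below uses a deliberately naive sentence splitter (it breaks on `.`, `!` and `?` followed by whitespace, so abbreviations and dialogue punctuation will confuse it), and the helper names are hypothetical.

```python
import re
import statistics


def sentence_lengths(text):
    """Word count of each sentence, using a naive punctuation-based split."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(sentence.split()) for sentence in sentences]


def rhythm_report(text):
    """Summarise how much sentence length varies in a passage."""
    lengths = sentence_lengths(text)
    if not lengths:
        raise ValueError("no sentences found")
    return {
        "sentences": len(lengths),
        "shortest": min(lengths),
        "longest": max(lengths),
        "mean": statistics.mean(lengths),
        "spread": statistics.pstdev(lengths),  # population standard deviation
    }


sample = (
    "He ran. The door at the end of the corridor was already closing "
    "when she reached it. Too late."
)

print(sentence_lengths(sample))  # [2, 15, 2]
print(rhythm_report(sample))
```

A page where nearly every sentence falls in a narrow band and the spread is small matches the flatness described above. Treat the numbers as a prompt for a closer read, not as a verdict on the prose.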

5. Tone calibration

Tone is whether the writing sounds like the genre it claims to be. A cosy mystery should not read like a forensic procedural. A literary character study should not have the action-beat rhythm of an airport thriller. A YA romance should not deploy the vocabulary of a literary memoir.

Tone failures happen in two ways. Some tools default to one house tone (usually a competent mid-list-thriller voice) and apply it regardless of genre. Other tools recognise the genre at the outline stage but lose calibration during chapter generation, drifting back to the default voice by chapter 5.

Test tone by generating the first 1,000 words of a book in three different genres (literary, cosy mystery, fantasy) and reading them side by side. Each should feel like its genre, not like the same AI voice with different costumes. Tools that route different genres through different prompt frameworks tend to win this dimension. Tools that use one universal fiction prompt usually do not.

6. Show-versus-tell balance

Show-versus-tell is the oldest writing-craft rule and the one AI most reliably violates. Telling is summary: "Sarah was angry." Showing is dramatisation: "Sarah's hand tightened around the mug until her knuckles went white." Good fiction uses both. Bad AI fiction uses telling for almost everything because telling is shorter and lower-risk.

The failure mode looks like this. The system generates a chapter where dramatic events happen, but they are reported rather than enacted. The protagonist remembers an argument instead of having one. A chase happens off-page. The climactic confrontation gets a paragraph of summary instead of three pages of beat-by-beat tension. The reader feels they are being told a story rather than experiencing one.

Test this by counting telling sentences in any randomly selected chapter. A telling sentence summarises emotion, action, or character without a sensory or behavioural detail. If more than 30% of the chapter is telling sentences, the system has a show-tell calibration problem.
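The arithmetic behind the 30% rule is simple enough to script once you have labelled the sentences. This sketch assumes a human reader marks each sentence as "tell" or "show"; it does not try to classify sentences automatically, and the names are hypothetical.

```python
TELLING_THRESHOLD = 0.30  # the 30% rule of thumb from this guide


def telling_share(labels):
    """Fraction of sentences a reader labelled 'tell' rather than 'show'."""
    if not labels:
        raise ValueError("no labelled sentences")
    return labels.count("tell") / len(labels)


# Hypothetical labels for the first ten sentences of a chapter.
labels = ["show", "tell", "show", "show", "tell",
          "show", "show", "show", "show", "show"]

share = telling_share(labels)
flagged = share > TELLING_THRESHOLD
print(f"{share:.0%} telling, calibration problem: {flagged}")
```

Two telling sentences out of ten is 20%, which sits under the threshold. Labelling a full chapter takes longer, but the calculation stays the same.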

7. Pacing across acts

Pacing is whether the book has structure. Most novels follow a three-act or four-part shape that goes back to Save the Cat for screenwriters and Aristotle for everyone else: setup, escalation, midpoint reversal, dark night, climax, resolution. Each phase has a different rhythm. Setup is patient. Escalation is brisk. The midpoint hits hard. The dark night is slow and heavy. The climax accelerates. The resolution exhales.

Weak AI novel writers produce books with no pacing variation. Every chapter has the same rhythm. The midpoint reversal is just another scene. The climax is just another chapter. The reader finishes feeling the book was the same intensity throughout, and they cannot remember the high point because there was not one.

Test pacing by reading three chapters: chapter 1, the chapter at the structural midpoint (chapter 12 of 24, for instance), and the climactic chapter near the end. If they feel different in tempo, weight, and emotional register, the system understands pacing. If they feel interchangeable, it does not. Tools with explicit act structure baked into the outline pass usually clear this dimension. Tools that treat outlines as a flat chapter list usually fail it.

How to Test Each Dimension in 30 Minutes

You do not need to write a full novel to evaluate a tool. Here is the 30-minute recipe.

- Generate an outline for a 24-chapter novel in a specific genre (cosy mystery and literary character study give the best signal because they have distinct tones).

- Generate chapter 1, chapter 12, and chapter 24. Skip the middle. You are testing whether the system can hold structure end to end, not whether it can write filler.

- Read chapter 12 first. Note: do you understand who the characters are, what is happening, why it matters? If yes, the system is feeding context. If no, it is doing one-shot generation.

- Read chapter 1. Compare characters and setup. Has the system stayed consistent? Have the named characters and stakes survived?

- Read chapter 24. Did the setup pay off? Did the antagonist matter? Did the protagonist arc resolve? Or did it just stop?

- Run the dialogue test. Pull three speeches by three different named characters. Strip the tags. Can you guess who is who?

- Run the show-tell count. In any one chapter, count summary sentences versus dramatised sentences. Below 30% summary is good.

- Read the prose aloud for one page. Does anything stick? If nothing does, prose voice is generic.

Eight steps, around 30 to 40 minutes. You will know if the tool is worth your novel before you have committed your novel to it. For a different angle on the same evaluation, see our breakdown of what AI can and cannot do for novel writing.

Red Flags That Show Up by Chapter 3

Some failure patterns are diagnostic. If you see any of these in the first three chapters of a generated novel, the tool will not improve over 24 chapters. It will get worse.

Re-introducing characters in chapter 3

If the system describes the protagonist's appearance again in chapter 3 as if introducing them, it is generating without the previous chapters in context. Coherence will collapse by chapter 8.

Generic settings in a specific genre

A cosy mystery set in a "small town" with no named streets, no named shops, no named neighbours is a tone failure. Genre-shaped systems fill in genre-specific texture automatically.

Dialogue homogenisation

If three different characters all use the same sentence rhythms and vocabulary in chapter 3, voice consistency is broken at the system level, not just the chapter level.

Episodic structure

If chapter 3 could be moved to chapter 8 without anyone noticing, the system is generating standalone episodes rather than a connected plot. Pacing will be flat across the whole book.

Summary climaxes

If a dramatic moment in chapter 3 is reported in two sentences ("They fought, and Sarah won.") instead of dramatised, the show-tell calibration is broken. The actual climax in chapter 22 will be just as flat.

Repeated phrasing

If you notice the same metaphor or stock phrase across chapters ("eyes like deep pools"), the prose voice is generic and the system is reaching for safe language. It will not improve.
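Repeated phrasing is the one red flag that is easy to search for mechanically. The sketch below looks for word sequences that appear in more than one chapter. It is a rough aid under stated assumptions: the function name `repeated_phrases` is hypothetical, and on real chapters it will also surface harmless common sequences, so the results need a human skim.

```python
import re
from collections import defaultdict


def repeated_phrases(chapters, n=4, min_chapters=2):
    """Return n-word phrases that occur in at least min_chapters chapters.

    chapters maps a chapter number to that chapter's text.
    """
    where = defaultdict(set)  # phrase -> chapter numbers it appears in
    for number, text in chapters.items():
        words = re.findall(r"[a-z']+", text.lower())
        for i in range(len(words) - n + 1):
            where[" ".join(words[i:i + n])].add(number)
    return {
        phrase: sorted(numbers)
        for phrase, numbers in where.items()
        if len(numbers) >= min_chapters
    }


# Invented excerpts standing in for two generated chapters.
chapters = {
    1: "Her eyes like deep pools held his gaze.",
    3: "He remembered eyes like deep pools.",
}

repeats = repeated_phrases(chapters)
print(repeats)  # {'eyes like deep pools': [1, 3]}
```

Shorter values of `n` catch more stock phrases but also more noise, so four or five words is a reasonable starting point to experiment with.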

What System Features Make These Dimensions Work

You can mostly ignore vendor model claims. Whether a tool runs on a frontier model or a fast model is less important than how the surrounding system uses that model. Here are the system-level features that move the dimensions, in order of impact.

Story bible or character cards

A persistent record of named characters, places, and rules that the system feeds into every chapter generation. Without one, every chapter is generated against the chapter outline alone, and the system has no way to know who the characters are. With one, voice consistency, coherence, and tone are all materially better.

Outline-aware chapter generation

The system reads the outline, knows what chapter this is in the structural arc, and adjusts pacing accordingly. Setup chapters are paced differently from climax chapters. Outline-blind systems generate every chapter as if it were a generic middle chapter, which is why they have flat pacing.

Previous-chapter context injection

The system feeds the actual text of the previous one to three chapters into the prompt for the next chapter. This is the single biggest determinant of long-context coherence in fiction. Tools that do this maintain continuity. Tools that skip it start losing threads by chapter 5.
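To make the three features described so far concrete, here is one way a chapter prompt could be assembled from a story bible, an outline beat, and the text of recent chapters. This is an illustrative sketch only. It does not describe how any particular product works, and `build_chapter_prompt`, the section labels, and the data shapes are all assumptions.

```python
def build_chapter_prompt(story_bible, outline, chapters_so_far,
                         chapter_number, context_chapters=2):
    """Assemble a prompt for one chapter from persistent context.

    story_bible:     {name: notes} for characters, places and rules
    outline:         {chapter_number: {"act": ..., "summary": ...}}
    chapters_so_far: {chapter_number: full text already written}
    """
    beat = outline[chapter_number]
    earlier = sorted(n for n in chapters_so_far if n < chapter_number)
    # Guard the zero case: earlier[-0:] would return every chapter.
    recent = earlier[-context_chapters:] if context_chapters > 0 else []

    bible_lines = "\n".join(f"- {name}: {notes}" for name, notes in story_bible.items())
    sections = [
        f"STORY BIBLE\n{bible_lines}",
        f"OUTLINE BEAT FOR CHAPTER {chapter_number} ({beat['act']}): {beat['summary']}",
    ]
    for n in recent:
        sections.append(f"PREVIOUS CHAPTER {n}\n{chapters_so_far[n]}")
    sections.append(
        f"Write chapter {chapter_number} so that it continues directly from the text above."
    )
    return "\n\n".join(sections)


# Hypothetical project data.
story_bible = {"Alex": "ex-detective; clipped, sardonic; grew up in Portland"}
outline = {4: {"act": "escalation", "summary": "Alex finds the forged ledger."}}
chapters_so_far = {1: "Chapter one text.", 2: "Chapter two text.", 3: "Chapter three text."}

prompt = build_chapter_prompt(story_bible, outline, chapters_so_far, chapter_number=4)
print(prompt)
```

The point of the sketch is the shape of the input, not the wording: the chapter is generated against who the characters are, where the chapter sits in the arc, and what was actually written last, rather than against a bare chapter title.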

Genre-specific prompt routing

Different genres get different instruction sets. A cosy mystery prompt scaffold is different from a literary character study prompt scaffold. Tools that recognise genre at outline time and route accordingly produce on-tone output. Tools that use one universal "write a chapter" prompt produce drift.

Show-tell calibration in the prompt

The strongest fiction prompts explicitly tell the underlying model to dramatise rather than summarise key moments, and to vary sentence rhythm. Without this, the default model behaviour is to summarise (it is shorter and easier).
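Genre routing and show-tell calibration can both be pictured as a small lookup that decides which instructions accompany every chapter request. The scaffold texts below are invented placeholders, and the names `GENRE_SCAFFOLDS` and `system_instructions` are hypothetical. A real system would use far longer instruction sets.

```python
# Invented, abbreviated scaffolds; real ones would be much more detailed.
GENRE_SCAFFOLDS = {
    "cosy mystery": "Warm, small-scale setting with named streets, shops and neighbours. "
                    "Violence stays off the page.",
    "literary": "Interior, character-led scenes. Favour precise observation over plot beats.",
    "fantasy": "Ground every scene in the rules of the world established in the story bible.",
}

DEFAULT_SCAFFOLD = "Write in the conventions of the stated genre."

# Applied to every genre: the explicit show-tell instruction.
DRAMATISE_RULE = (
    "Dramatise key moments beat by beat rather than summarising them, "
    "and vary sentence rhythm."
)


def system_instructions(genre):
    """Pick the genre scaffold and append the show-tell rule."""
    scaffold = GENRE_SCAFFOLDS.get(genre.strip().lower(), DEFAULT_SCAFFOLD)
    return f"{scaffold}\n{DRAMATISE_RULE}"


print(system_instructions("Cosy Mystery"))
```

The fallback to a default scaffold is where the single universal prompt lives. The more genres that land on it, the more likely the output drifts to one house tone.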

None of this requires a frontier model. We have seen on the inside what a well-built fiction system can do with a fast lightweight model and what a poorly built one does with the most expensive model on the market. The system matters more than the engine. Authors building a long-running novel workflow should optimise for the system, not the model claims. Read more on this in our guide to AI tools for long novels.

A Simple Scoring Sheet

If you want to formalise the evaluation, score each dimension out of 5 after reading three chapters. Total out of 35. Decision rule:

| Score | Reading | Recommendation |
|---|---|---|
| 28-35 | Strong on all dimensions, distinctive prose | Commit to a full novel here. |
| 21-27 | Solid coherence and structure, prose competent | Use it. Edit prose voice in pass 2. |
| 14-20 | Drafting tool, not a novel-finishing tool | Useful for outlines and ideation, not full books. |
| Under 14 | Surface-level fluency, structural breakdown | Pass. The output will need a full rewrite. |

Most tools in 2026 score in the 14 to 20 band. Tools purpose-built for novels (with outline scaffolding, story bibles, and previous-chapter context) score 21 to 27. The 28+ tier requires both the system features above and careful editing-pass prompts. Inkfluence AI's novel-writing flow is built around the 21+ band by design, with the dimensions above as the explicit quality target.
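The scoring sheet translates directly into a short script if you are comparing several tools. The bands and recommendations below come from the table above. The dimension keys, the function names, and the choice to accept scores from 0 to 5 are assumptions made for this sketch.

```python
DIMENSIONS = (
    "coherence", "character_voice", "plot_logic", "prose_voice",
    "tone", "show_tell", "pacing",
)


def total_score(scores):
    """Sum the seven dimension scores after checking each is between 0 and 5."""
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if not 0 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 0 and 5")
    return sum(scores[name] for name in DIMENSIONS)


def recommendation(total):
    """Map a total out of 35 to the decision rule in the table."""
    if total >= 28:
        return "Commit to a full novel here."
    if total >= 21:
        return "Use it. Edit prose voice in pass 2."
    if total >= 14:
        return "Useful for outlines and ideation, not full books."
    return "Pass. The output will need a full rewrite."


# Hypothetical scores for one tool after reading three chapters.
scores = {
    "coherence": 4, "character_voice": 3, "plot_logic": 3, "prose_voice": 3,
    "tone": 4, "show_tell": 3, "pacing": 3,
}

total = total_score(scores)
print(total, recommendation(total))  # 23 Use it. Edit prose voice in pass 2.
```

Scoring two or three tools on the same test outline makes the totals comparable, which matters more than the absolute number.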

Where AI Novel Quality Sits in 2026

The honest baseline: with thorough editing, AI-assisted fiction in 2026 reads as competent genre fiction. The best of it is indistinguishable from mid-list, traditionally written commercial fiction. The worst of it is fluent, structurally weak, voice-flat material that will not hold a reader past chapter 4. The gap between best and worst is enormous, and it is mostly explained by the system features in the previous section, not by which underlying model anyone is using.

The Society of Authors position on AI in fiction is sceptical of replacement and supportive of co-writing. That is the right framing for 2026. The author is the editor, the voice, the originality. The AI is the speed and structural scaffolding. A good AI novel writer makes the second part faster without forcing the author to compromise on the first. A bad one makes the second part fast and the first part impossible.

Test on the seven dimensions. Pick the tool that clears them. Then put the editing hours in.

Frequently Asked Questions

What is the most important quality dimension for AI novel writing?

Does the underlying AI model matter for novel writing quality?

How long does it take to evaluate an AI novel writer?

What is the difference between AI novel coherence and AI novel quality?

Why does AI fiction often fail at show-versus-tell?

Can AI write a novel that scores high on all seven dimensions without human editing?

Which genres are easiest for AI novel writers to handle well?

Should I evaluate AI novel writers using my own book idea or a generic test idea?

What to Read Next

Best AI Models for Novel Writing 2026 (Head-to-Head)

GPT-5, Claude Opus 4.7, Sonnet 4.5, Gemini 2.5 Pro, GPT-4o tested against this exact rubric.

Can AI Write a Novel? What Actually Works in 2026

An honest look at what AI can and cannot do for full-length fiction.

Best AI for Novel Continuity Checking

5 tools compared for keeping characters, plots, and timelines consistent.

Best AI Tools for Long Novels in 2026

Which tools actually finish 70,000+ word novels without losing the thread.

How to Avoid Generic AI Book Output

Practical techniques for making AI-generated writing sound unique and personal.

Founder, Inkfluence AI

Sam is the founder of Inkfluence AI. He built the platform to make book creation accessible to everyone - from first-time authors to seasoned publishers.

Helpful links

Ready to Create Your Own Ebook?

Start writing with AI-powered tools, professional templates, and multi-format export.

Get Started FreeRelated Articles

AI Writing

AI Writing Best AI Models for Novel Writing in 2026: Head-to-Head Test (GPT-5, Claude, Gemini)

We tested GPT-5, GPT-4o, Claude Sonnet 4.5, Claude Opus 4.7, and Gemini 2.5 Pro on the same novel chapter prompts across 5 genres. Real scores for prose, character voice, plot logic, and continuity. Tested April 2026.

AI Writing

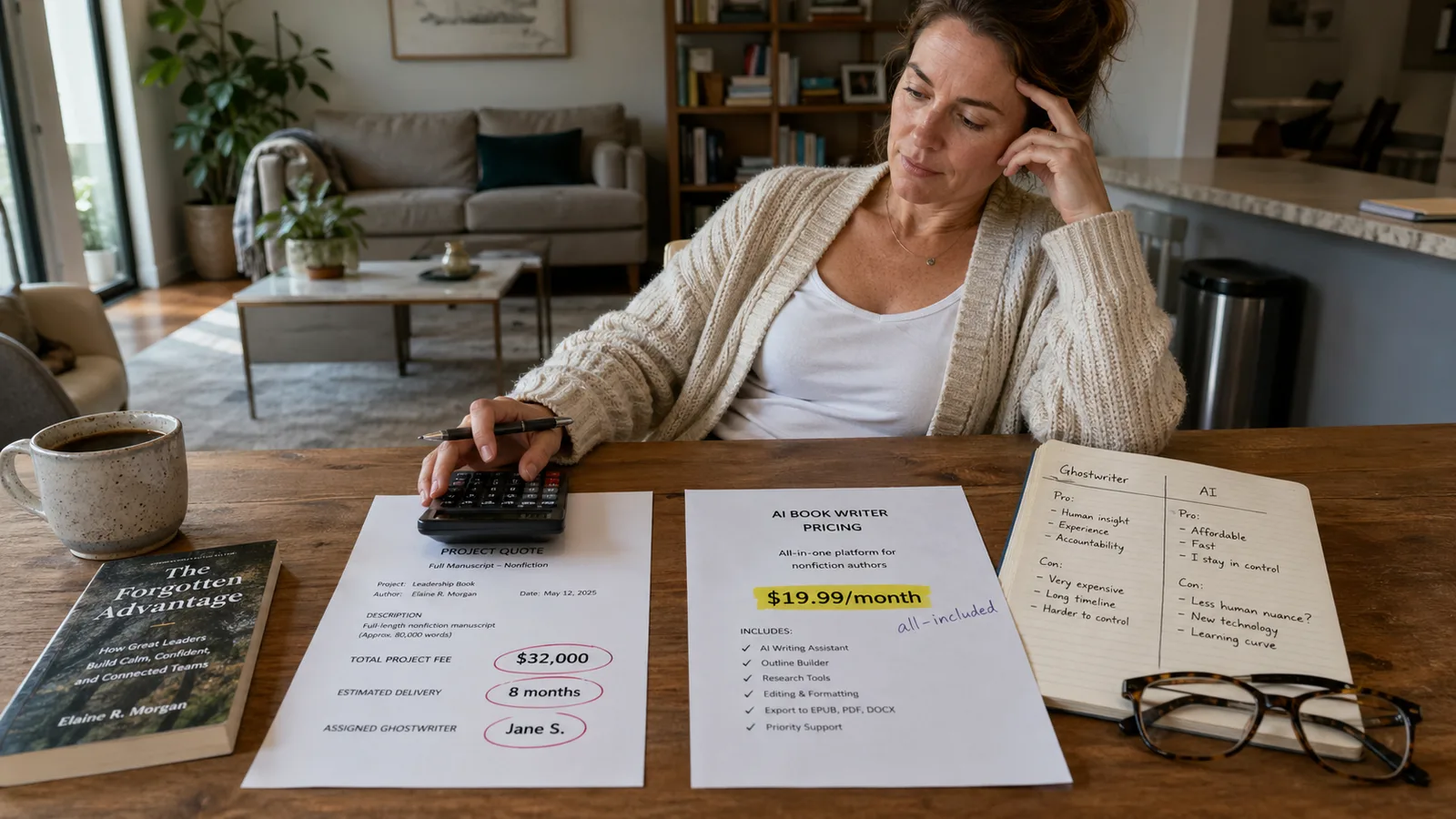

AI Writing AI vs Ghostwriter Cost in 2026: What a Nonfiction Book Actually Costs Both Ways

Verified 2026 ghostwriter pricing ($5,000 entry-level to $250,000+ for elite) vs AI book writers ($0 to $19.99/mo). Real ranges, when each makes sense, and the hybrid that beats both.

AI Writing

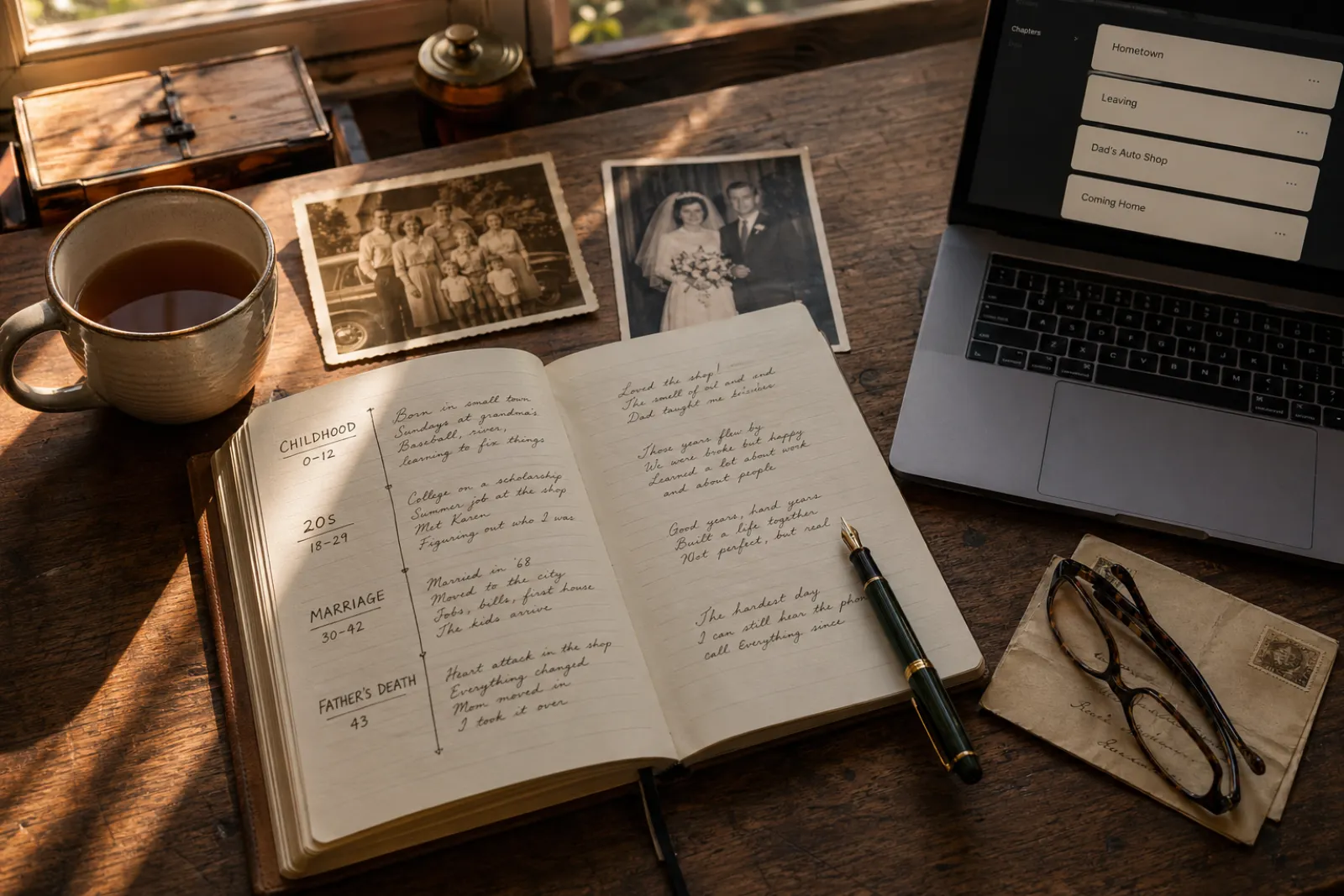

AI Writing How to Write a Memoir with AI in 2026: A Step-by-Step Guide

A practical, honest guide to writing a memoir with AI in 2026. The 7-step process from premise to published book, what AI does well, what stays in your hands, and why the system handles "I met Quincy Jones at a charity benefit" without turning it into fan fiction.

Get ebook tips in your inbox

Join creators getting weekly strategies for writing, marketing, and selling ebooks.